Seminar Series

Our seminar series brings together researchers working on graphs, machine learning, network science, and related areas. It aims to foster interaction across complementary research directions, from geometry and mathematical modeling to data-driven and statistical approaches, and to encourage new research collaborations.

Browse past and upcoming talks, discover the speakers, and access slides and videos.

Watch the full playlist: NGML Seminar Series Playlist

Interested in attending the live seminars? Please contact antonio.longa@uit.no

Sparse polynomial approximation for surrogate modeling on networks

Linear approximation methods often struggle to capture complex, high-dimensional functions efficiently, because improving accuracy requires a rigid, uniform increase in degrees of freedom. Nonlinear approximation has emerged as a powerful paradigm for overcoming this limitation: by adaptively selecting only the most significant basis functions, it achieves optimal compression rates and substantially reduces computational cost. Among these tools, sparse polynomials offer a highly effective framework, using adaptive selection strategies to represent intricate systems accurately with only a small subset of nonzero coefficients. While sparse polynomials have been very successful in classical applications such as uncertainty quantification and parametric differential equations, their extension to network-structured data is a rapidly evolving frontier. In this seminar, we will review the core mechanics of sparse polynomials as a nonlinear approximation tool and explore their recent use in modeling diffusion processes on graphs. Finally, we will present numerical test cases showing how sparse polynomials perform across different network models and topologies, and use them to discuss the practical utility, scalability, and particular challenges of capturing complex dynamics on graphs with sparse polynomials.

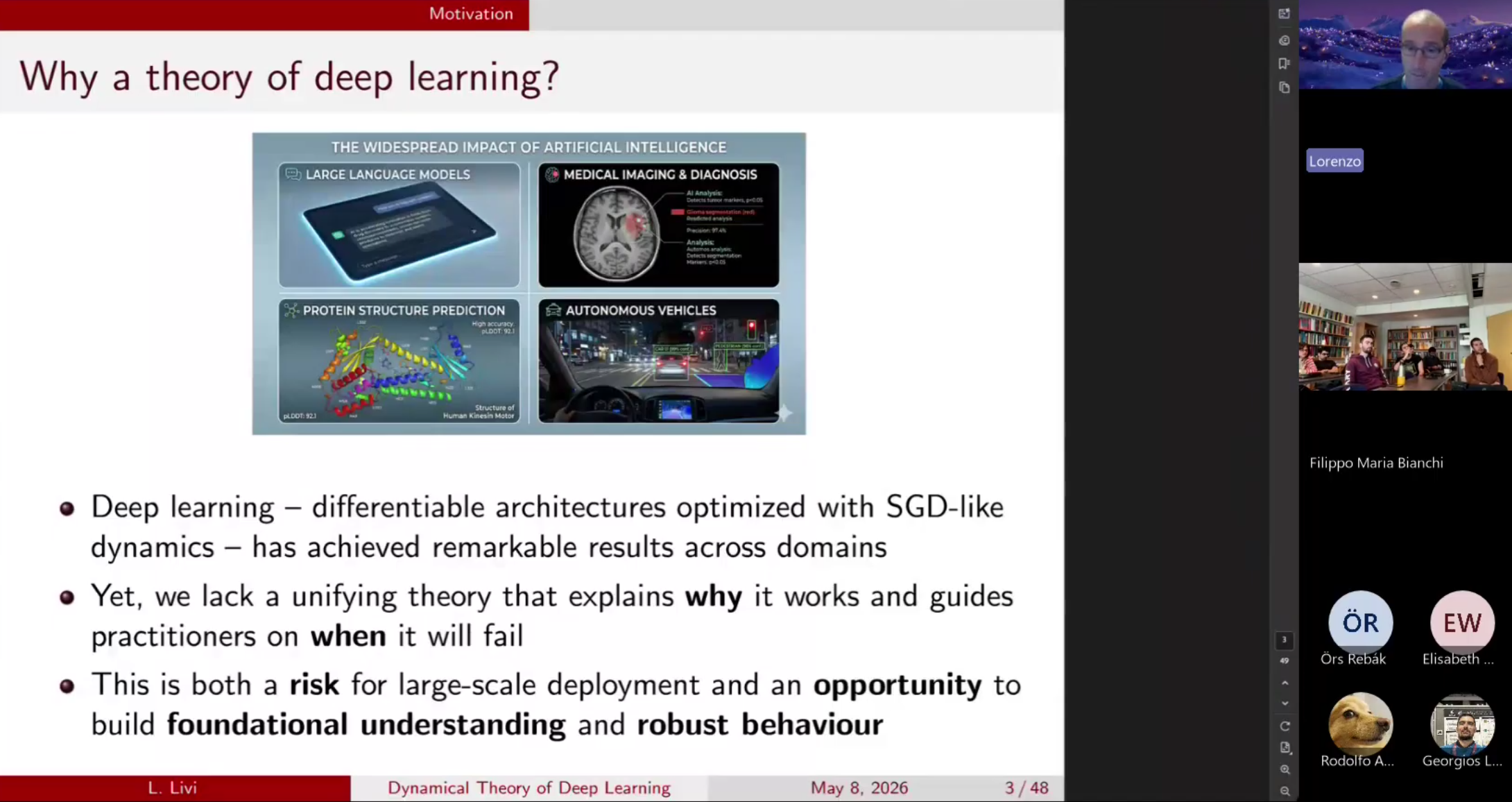

Toward a Dynamical Theory of Deep Learning: Coupled State-Parameter Dynamics and Time-Scale Interaction

Modern deep learning systems exhibit complex dynamical behavior, yet a principled theory explaining how these dynamics shape learning and memory is still missing. This talk presents a research program toward a dynamical theory of deep learning based on the interaction between state and parameter dynamics in recurrent neural networks. I show how gating mechanisms induce lag-dependent effective learning rates that constrain temporal credit assignment, and introduce a learnability theory characterizing the maximum temporal horizon at which gradient signals remain detectable under heavy-tailed stochastic gradients. Finally, I discuss recent results linking these learning limits to the distribution of neuron-wise time scales emerging during training, whose geometry determines the temporal reach of learning.

Geometry of neural networks

This seminar offers an overview of recent advances in the geometric aspects of neural networks. Specifically, we will explore how concepts from discrete and tropical geometry can be used to describe the geometric complexity of neural networks. No prior knowledge of geometry is required.

From Neural Networks to Graph Neural Networks

This seminar introduces the transition from traditional neural networks to Graph Neural Networks (GNNs), highlighting the limitations of multilayer perceptrons (MLPs) in modeling relational data. It presents graphs as a natural representation for such data and describes GNNs through the message-passing paradigm. Key architectures, challenges, and current research directions, including spatio-temporal graph learning, are briefly discussed.

Sheaf Neural Networks

This seminar provides an accessible introduction to the mathematical concept of sheaves. We will focus on sheaves defined on graphs, explaining the intuition behind them and their basic properties. Building on this foundation, we will introduce Sheaf Neural Networks, a recent extension of Graph Neural Networks that uses sheaf structures.